MCP docs

Choose the right ctx| MCP install path for individuals, teams, and clients that need manual configuration.

ctx| exposes an organization-scoped remote MCP server at:

https://app.ctxpipe.ai/mcp?orgSlug=your-org

For self-hosted deployments, keep the same /mcp?orgSlug=... path and swap in your own base URL.
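As a minimal sketch of the base-URL rule described above, the helper below joins a base URL and an org slug into the MCP endpoint. The function name mcp_url and the self-hosted hostname are illustrative assumptions, not part of ctx|.

```python
from urllib.parse import urlencode

HOSTED_BASE_URL = "https://app.ctxpipe.ai"


def mcp_url(org_slug: str, base_url: str = HOSTED_BASE_URL) -> str:
    """Build the organization-scoped MCP URL (hypothetical helper, not a ctx| API)."""
    if not org_slug:
        raise ValueError("orgSlug is required")
    # Drop any trailing slash so the result never contains "//mcp".
    return f"{base_url.rstrip('/')}/mcp?{urlencode({'orgSlug': org_slug})}"


# Hosted endpoint.
print(mcp_url("acme"))
# Self-hosted: same path and query, different base URL (placeholder hostname).
print(mcp_url("acme", base_url="https://ctx.example.internal/"))
```

Only the base URL changes between the hosted and self-hosted cases. The path and the orgSlug query parameter stay the same.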

Choose Your Install Path

There are three practical ways to install ctx| MCP:

- Individual install with the CLI - best for a developer setting up their own agent clients on one machine.

- Raise pull requests - best for enabling a team by committing MCP config files into shared repositories.

- Manual config - useful when you need to paste JSON yourself or use a client the CLI does not fully automate.

The CLI and PR flow both write configuration. They do not remove the need for MCP authorization. When a client first uses ctx| MCP, it should still redirect you to ctx| so you can sign in and authorize access.

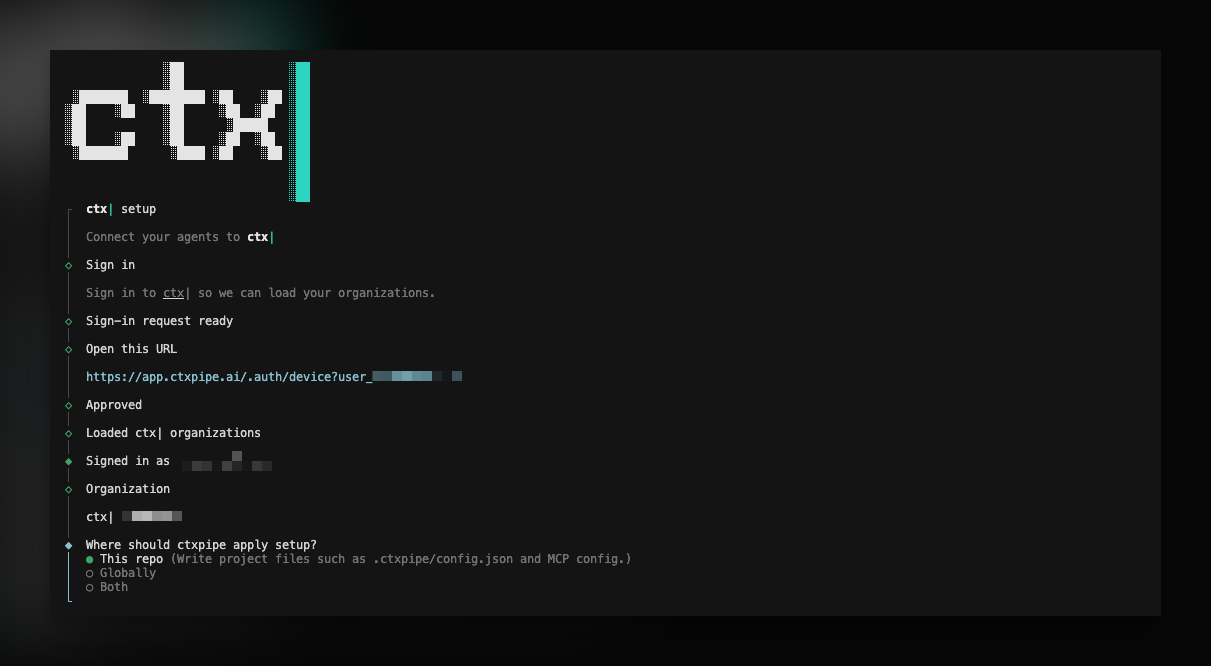

CLI installer (npx ctxpipe)

The CLI is the recommended path for an individual developer. It detects common agent clients, writes the right config shape for each one, and lets you choose whether the config belongs to the current repo, your user profile, or both.

From any repo:

npx ctxpipe init

That runs an interactive wizard for:

- signing in for setup,

- selecting your organization,

- choosing repo, user, or both scopes,

- selecting agent clients,

- reviewing the planned file changes before writing.

For MCP-only setup, use:

npx ctxpipe mcp add

For scripts and CI, use explicit flags:

npx ctxpipe init --org acme --agents cursor,claude --scope repo --yes

npx ctxpipe mcp add --org acme --client opencode --scope user --yes

npx ctxpipe doctor --json

Use npx ctxpipe init --help and npx ctxpipe mcp add --help for the full flag list, including --base-url, --org, --scope, --agents, --client, --yes, --json, and --dry-run.
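For scripted rollouts, it can help to compose the command line in one place. The sketch below only builds and prints the argument list. It assumes --dry-run can be combined with the other flags shown on this page, so confirm that against --help before relying on it. The helper name mcp_add_argv is hypothetical.

```python
import shlex


def mcp_add_argv(org: str, client: str, scope: str, dry_run: bool = False) -> list[str]:
    """Compose a non-interactive `npx ctxpipe mcp add` invocation (hypothetical helper)."""
    argv = ["npx", "ctxpipe", "mcp", "add",
            "--org", org, "--client", client, "--scope", scope, "--yes"]
    if dry_run:
        # Assumption: --dry-run may be combined with the flags above.
        argv.append("--dry-run")
    return argv


# Print the commands instead of running them, so the sketch has no side effects.
for client in ("claude", "opencode"):
    print(shlex.join(mcp_add_argv("acme", client, "user", dry_run=True)))
```

To execute a command rather than print it, the same list could be passed to subprocess.run(argv, check=True).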

Setup sign-in is separate from MCP authorization. The CLI signs you in so it can list organizations and write correct config. It stores setup credentials in the OS keychain when available, with a file fallback under ~/.config/ctxpipe/ when keyring access is not possible.

Supported CLI clients:

- Cursor - repo config in .cursor/mcp.json, user config in ~/.cursor/mcp.json.

- Claude Code - repo config in .mcp.json; user setup uses claude mcp add when the Claude CLI is available.

- OpenCode - repo config in opencode.json, user config in ~/.config/opencode/opencode.json.

- VS Code / Copilot - repo config in .vscode/mcp.json; user setup provides the VS Code install link.

- Codex - user setup uses codex mcp add when available; otherwise the CLI prints the command to run.

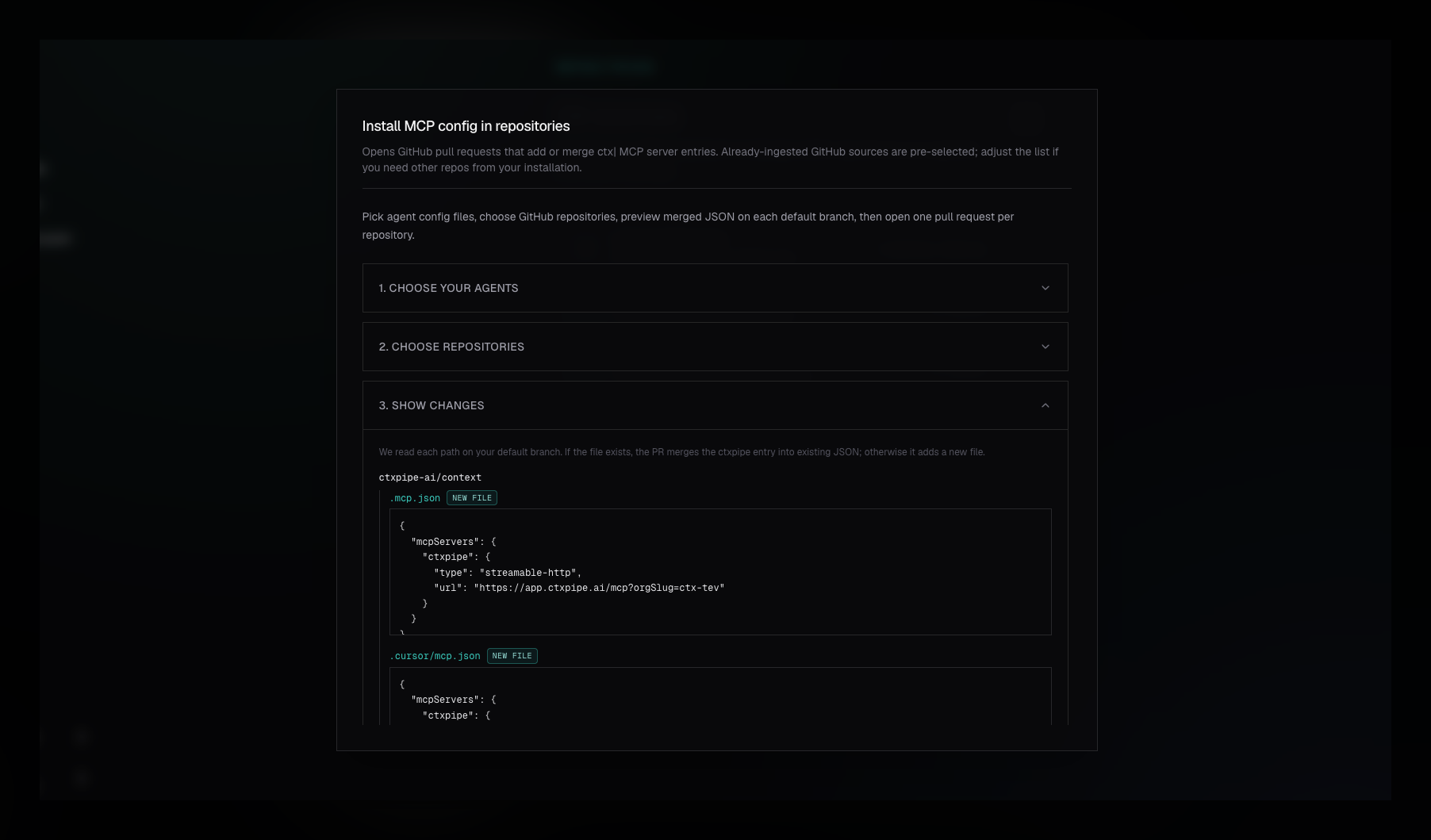

Team Setup Via Pull Requests

For a team, committing MCP config files into repositories is usually better than asking every developer to hand-edit local files. ctx| can raise GitHub pull requests that add or merge the right config files for selected repos.

Use this path when:

- your team wants a reviewed, repeatable MCP rollout,

- config should travel with the repository,

- admins want to enable Cursor, Claude Code, or OpenCode for multiple repos at once.

The PR workflow currently supports:

- Cursor via .cursor/mcp.json,

- Claude Code via .mcp.json,

- OpenCode via opencode.json.

It does not currently raise PRs for Codex or VS Code config. Use the CLI for those clients.
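To illustrate what adding or merging a config file can look like, here is a minimal sketch that inserts a ctxpipe entry into a JSON config while leaving any other servers in place. It is not the logic ctx| itself uses. The helper name merge_server and the pre-existing "other" server are made up for the example, and the file is assumed to be plain JSON without comments.

```python
import json
import tempfile
from pathlib import Path


def merge_server(path: Path, top_key: str, entry: dict, name: str = "ctxpipe") -> dict:
    """Add or replace one server entry, leaving every other key untouched."""
    # Assumes the file, if present, holds plain JSON (no comments).
    config = json.loads(path.read_text()) if path.exists() else {}
    config.setdefault(top_key, {})[name] = entry
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2) + "\n")
    return config


with tempfile.TemporaryDirectory() as repo:
    target = Path(repo) / ".cursor" / "mcp.json"
    target.parent.mkdir()
    # A pre-existing server that the merge must preserve (made-up entry).
    target.write_text(json.dumps({"mcpServers": {"other": {"url": "https://example.com/mcp"}}}))
    merged = merge_server(
        target,
        "mcpServers",
        {"type": "streamable-http", "url": "https://app.ctxpipe.ai/mcp?orgSlug=your-org"},
    )
    print(sorted(merged["mcpServers"]))
```

Merging by key rather than overwriting the file is what keeps the resulting pull request diff limited to the ctxpipe entry.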

To use the PR workflow, open Repositories, choose Install MCP via PRs, select agent config formats and repositories, review the JSON preview, then raise the PRs. Each repository gets its own branch and pull request.

You must have a connected GitHub App installation, the target repositories must be visible to that installation, and your ctx| user must be an organization admin or owner.

Read the full team rollout guide: Install MCPs via PR.

Manual Configuration

Manual config is the fallback when you do not want to use the CLI, the client is managed elsewhere, or you need to inspect exactly what will be written.

On first use, your MCP client should redirect you to ctx| to sign in and authorize access. After you approve, you are returned to the client and the server is ready to use.

No bearer token setup is required when your client supports OAuth redirect for MCP.

Client-Specific Configuration

Different clients do not use exactly the same JSON shape. That is the main reason the CLI exists.
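The differences can be summarized in a few lines. The sketch below mirrors the JSON shapes documented in the client sections of this page. The function name client_config is hypothetical, and the client identifiers reuse the values shown for the --agents and --client flags. Codex is left out because it is configured through a command rather than a JSON file.

```python
import json


def client_config(client: str, url: str) -> dict:
    """Return the JSON document each client expects for a ctxpipe server."""
    if client in ("cursor", "claude"):
        return {"mcpServers": {"ctxpipe": {"type": "streamable-http", "url": url}}}
    if client == "opencode":
        return {"mcp": {"ctxpipe": {"type": "remote", "url": url, "enabled": True}}}
    if client == "vscode":
        return {"servers": {"ctxpipe": {"type": "http", "url": url}}}
    raise ValueError(f"no JSON config shape known for client: {client}")


url = "https://app.ctxpipe.ai/mcp?orgSlug=your-org"
for client in ("cursor", "claude", "opencode", "vscode"):
    print(client, json.dumps(client_config(client, url)))
```

Both the top-level key and the type value vary between clients, so copying one client's file into another client's location generally will not work.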

Cursor

Config file: .cursor/mcp.json at the root of your project, or ~/.cursor/mcp.json for user-level config.

{
  "mcpServers": {
    "ctxpipe": {
      "type": "streamable-http",
      "url": "https://app.ctxpipe.ai/mcp?orgSlug=your-org"
    }
  }
}

Claude Code

Project config file: .mcp.json.

{
  "mcpServers": {
    "ctxpipe": {
      "type": "streamable-http",
      "url": "https://app.ctxpipe.ai/mcp?orgSlug=your-org"
    }
  }
}

For user-level setup, prefer npx ctxpipe mcp add --client claude --scope user or the Claude CLI's claude mcp add command.

OpenCode

Config file: opencode.json at the repo root, or ~/.config/opencode/opencode.json for user-level config.

{
  "mcp": {
    "ctxpipe": {
      "type": "remote",
      "url": "https://app.ctxpipe.ai/mcp?orgSlug=your-org",
      "enabled": true
    }
  }
}

VS Code / Copilot

Repo config file: .vscode/mcp.json.

{
  "servers": {
    "ctxpipe": {
      "type": "http",
      "url": "https://app.ctxpipe.ai/mcp?orgSlug=your-org"
    }
  }
}

For user-level setup, use the install link printed by npx ctxpipe mcp add --client vscode --scope user.

Codex

Use the Codex MCP command:

codex mcp add ctxpipe --url "https://app.ctxpipe.ai/mcp?orgSlug=your-org"

The CLI prints this for you when it cannot run it directly.

Other clients

If your client's docs mention only http or SSE, check the client's current documentation for the transport value it expects. New remote MCP integrations generally use Streamable HTTP, but client config keys still vary.

Troubleshooting

- CLI sign-in worked, but the MCP client still asks for authorization - expected. CLI setup auth and MCP client OAuth are separate flows.

- Connection fails after pasting URL only - add the client-specific type field. Most clients do not infer the transport reliably.

- OpenCode shows the server but it never connects - use the top-level mcp.ctxpipe shape with type: "remote" and enabled: true.

- streamable-http is not recognized - upgrade the client, or check whether that client expects http for its config key while still using a Streamable HTTP remote server.

- Team PR flow is unavailable - confirm GitHub is connected, the repositories are visible to the GitHub App installation, and your ctx| account is an organization admin or owner.

- Your client cannot complete OAuth - use a client with remote MCP OAuth support. The hosted docs assume OAuth-based MCP access.
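Some of the config-shape problems above can be caught before opening the client. The following is a rough sketch of such a check, not a ctx| tool. The function name diagnose and the wording of the messages are invented for illustration, and the expected top-level keys are taken from the client sections of this page.

```python
# Top-level key each client uses, as documented on this page.
TOP_LEVEL_KEY = {
    "cursor": "mcpServers",
    "claude": "mcpServers",
    "opencode": "mcp",
    "vscode": "servers",
}


def diagnose(client: str, config: dict) -> list[str]:
    """Return human-readable problems found in a parsed MCP config."""
    top_key = TOP_LEVEL_KEY[client]
    entry = config.get(top_key, {}).get("ctxpipe")
    if entry is None:
        return [f"no ctxpipe entry under top-level '{top_key}'"]
    problems = []
    if "type" not in entry:
        problems.append("missing 'type' field")
    elif client == "opencode" and entry["type"] != "remote":
        problems.append("OpenCode expects type 'remote'")
    if client == "opencode" and entry.get("enabled") is not True:
        problems.append("OpenCode expects enabled: true")
    return problems


url = "https://app.ctxpipe.ai/mcp?orgSlug=your-org"
# URL pasted without a transport type.
print(diagnose("cursor", {"mcpServers": {"ctxpipe": {"url": url}}}))
# Cursor-style shape placed in an OpenCode config.
print(diagnose("opencode", {"mcpServers": {"ctxpipe": {"type": "streamable-http", "url": url}}}))
```

An empty list means none of these particular problems were found. It does not prove that the client will connect.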

Notes

- orgSlug is required. Replace your-org with your organization slug from ctx|.

- Do not commit secrets into repository MCP config.

- For team-wide repo config, prefer Install MCPs via PR over asking every developer to copy JSON by hand.
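A small pre-commit style check can catch a missing or placeholder orgSlug before a config file reaches a shared repository. This is a sketch under the assumption that the URL follows the /mcp?orgSlug=... form shown on this page. The function name check_mcp_url is hypothetical.

```python
from urllib.parse import parse_qs, urlsplit


def check_mcp_url(url: str) -> list[str]:
    """Return problems with a ctx| MCP URL before it is committed."""
    parts = urlsplit(url)
    problems = []
    if not parts.path.endswith("/mcp"):
        problems.append("path should end with /mcp")
    # parse_qs drops blank values, so "orgSlug=" counts as missing.
    slugs = parse_qs(parts.query).get("orgSlug", [])
    if not slugs:
        problems.append("orgSlug query parameter is missing")
    elif slugs[0] == "your-org":
        problems.append("orgSlug still holds the your-org placeholder")
    return problems


print(check_mcp_url("https://app.ctxpipe.ai/mcp?orgSlug=acme"))
print(check_mcp_url("https://app.ctxpipe.ai/mcp?orgSlug=your-org"))
print(check_mcp_url("https://app.ctxpipe.ai/mcp"))
```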

Related

- Managing your organization - org slug and membership

- Install MCPs via PR - team rollout through GitHub pull requests